A routine phone notification turned into an unexpected shock for thousands of users, and within minutes, screenshots were spreading across social media. What was meant to be a simple news alert instead contained an offensive racial slur, triggering confusion, anger, and urgent questions about how one of the world’s biggest tech companies let it happen.

The incident forced Google to issue a public apology and opened a wider conversation about automation, content moderation, and the risks of algorithm-driven news delivery in today’s fast-moving digital world.

A Notification That Caught Users Off Guard

The controversy began when some smartphone users received a push notification from Google’s news service summarizing a trending entertainment story. Instead of presenting a carefully filtered headline, the alert displayed a racial slur in full, visible directly on users’ lock screens.

Because notifications appear instantly and publicly — often without context — many recipients were stunned. Screenshots quickly circulated online, and criticism followed almost immediately.

For many users, the question wasn’t just why the word appeared, but how a company known for advanced technology and strict safety systems could allow such a mistake to reach people’s personal devices.

The Incident Behind the News Story

The notification was tied to reporting about an incident during the 2026 BAFTA Film Awards ceremony. During the broadcast, an audience member living with Tourette syndrome involuntarily shouted a racial slur while actors were presenting on stage.

News organizations covered the moment as part of broader reporting on the awards show and discussions about live broadcast delays and disability awareness.

However, when Google’s automated system generated a summary notification based on online articles, the platform failed to censor the sensitive term. Instead, the word appeared directly in the notification text — stripped of context and safeguards.

That single technical failure transformed a news update into a viral controversy.

Google’s Apology and Explanation

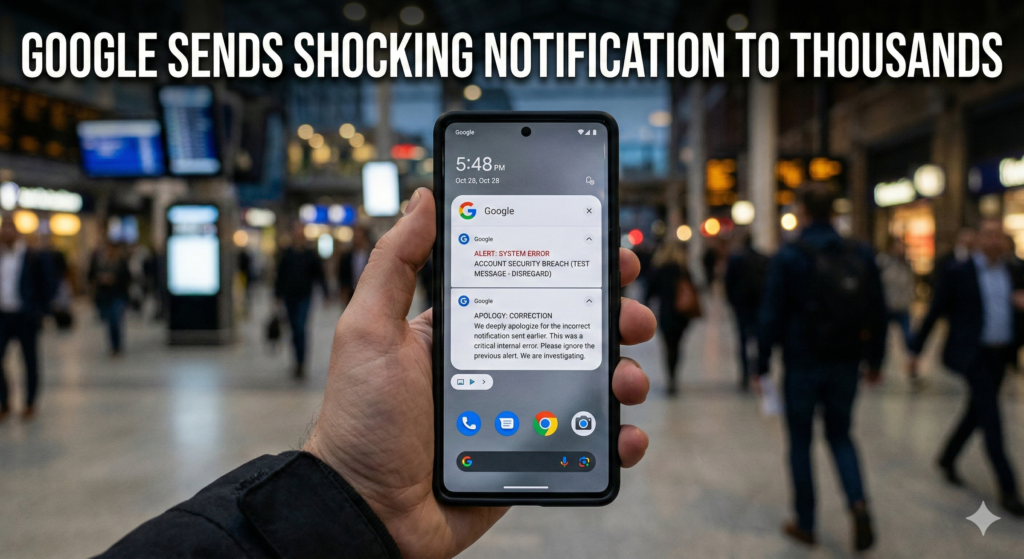

Soon after screenshots of the alert began circulating online, Google issued a statement apologizing for the mistake. The company described the notification as the result of a technical malfunction rather than intentional messaging.

According to Google, the issue occurred within automated systems designed to summarize news content and deliver breaking alerts to users. Safety filters that normally block offensive or harmful language did not function as intended.

Importantly, the company clarified that generative artificial intelligence was not responsible for the error. Instead, it stemmed from automated content-processing tools that failed to properly recognize and censor sensitive wording.

Google said only a limited number of users received the alert and confirmed the notification was removed quickly once the issue was discovered.

How Automated News Alerts Actually Work

To understand why this happened, it helps to look at how modern news notifications operate.

Platforms like Google News rely heavily on automation. Algorithms continuously scan large volumes of articles, identify trending topics, and generate short summaries designed to inform users quickly.

These systems prioritize speed and relevance. When major stories begin trending, automated tools extract keywords and phrases to create concise alerts suitable for mobile devices.

But automation comes with risks.

Unlike human editors, algorithms struggle with nuance, especially when handling sensitive language quoted in news reports. A word included for journalistic accuracy can read as harmful once it is separated from the explanation around it.

Experts say this is one of the biggest challenges facing tech companies today: balancing rapid information delivery with responsible moderation.

Why Safety Filters Failed

Content moderation systems typically rely on layers of filters that detect harmful words and either censor or replace them. In this case, those safeguards did not activate properly.

Technology analysts suggest the system may have misread how the word appeared in source articles. Because journalists were quoting it to report on an incident rather than using it as an insult, the software may have categorized the text as informational rather than offensive.

When the notification generator condensed the story into a short alert, context was lost — and the slur slipped through uncensored.

This highlights a well-known limitation in automated moderation: machines can identify patterns, but understanding intent remains far more complicated.
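One commonly discussed safeguard is to run a final check on the exact text that will reach the lock screen, after summarization, so that it no longer matters how the source article was classified. The Python sketch below is illustrative only and is not Google's system: the `BLOCKLIST` contents (harmless placeholder words standing in for real slurs) and the `mask_blocked_terms` function are hypothetical, and real moderation pipelines use far more than a word list.

```python
import re

# Hypothetical blocklist: placeholder tokens stand in for real offensive terms.
BLOCKLIST = {"badword", "slurword"}

# Whole-word, case-insensitive match for any blocklisted term.
_PATTERN = re.compile(
    r"\b(" + "|".join(re.escape(term) for term in sorted(BLOCKLIST)) + r")\b",
    re.IGNORECASE,
)


def mask_blocked_terms(text: str) -> tuple[str, bool]:
    """Mask blocklisted words in the final alert text.

    Returns the masked text and whether anything was masked, so a caller
    could hold the alert for human review instead of sending it.
    """

    def _mask(match: re.Match) -> str:
        word = match.group(0)
        # Keep the first letter, replace the rest with asterisks.
        return word[0] + "*" * (len(word) - 1)

    masked, count = _PATTERN.subn(_mask, text)
    return masked, count > 0


if __name__ == "__main__":
    alert = "Audience member shouts BadWord during awards broadcast"
    print(mask_blocked_terms(alert))
```

Because the check runs on the condensed alert rather than the full article, lost context cannot let a listed word through. The whole-word matching also shows the approach's limits: a variant such as "badwords" is not caught, which is one reason platforms layer several filters and add human review for high-risk content.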

The Role of Context in Digital Communication

One reason the incident caused such strong reactions is the unique nature of push notifications.

Unlike articles or social media posts, notifications appear without warning. Users cannot preview or choose the wording before it shows up on their screen.

That makes context especially important — and mistakes more visible.

Media experts note that language acceptable within a detailed news report can become harmful when presented alone. A single sentence, stripped of explanation, can change meaning entirely.

In this case, many recipients saw only the offensive word, not the story explaining why it appeared in the first place.

Growing Concerns About Automation in Big Tech

The episode arrives at a time when technology companies are increasingly relying on automation to manage enormous volumes of content.

From news recommendations to search summaries and personalized alerts, algorithms now play a central role in how people receive information.

While automation improves efficiency and personalization, it also introduces new vulnerabilities. A small technical error can instantly affect millions of users worldwide.

Digital ethics researchers say incidents like this demonstrate the importance of maintaining human oversight alongside automated systems.

Even advanced technology still requires careful monitoring when dealing with sensitive cultural and social issues.

Disability Awareness and Public Reaction

Another layer of complexity surrounding the story involves the original awards-show incident itself.

Advocacy groups emphasized that the outburst during the ceremony was linked to Tourette syndrome, a neurological condition that can cause involuntary vocal tics. Many advocates urged the public to approach the situation with understanding rather than blame.

However, once the word appeared in Google’s notification, the conversation shifted away from awareness and toward technology accountability.

The incident became less about the broadcast moment and more about how digital platforms handle sensitive material.

What Google Says It Will Change

Following the backlash, Google confirmed it is reviewing how notification text is generated and strengthening safeguards designed to prevent offensive language from appearing in alerts.

The company plans to refine filtering systems and introduce additional checks to ensure sensitive terms cannot bypass moderation layers in the future.

Industry observers expect tech platforms to invest more heavily in hybrid moderation models — combining automated detection with human review for high-risk content categories.

Such changes could slow down notification delivery slightly, but experts say accuracy and responsibility are becoming more important than speed alone.

Why This Story Matters to Readers Today

At first glance, the incident may appear to be a simple technical error. But it reflects a broader shift in how people consume news.

Millions of people now rely on automated alerts rather than visiting news websites directly. Automated systems increasingly decide which stories appear on users’ phones first, shaping public awareness in real time.

When those systems fail, the impact is immediate and personal.

The controversy also raises important questions:

- How much trust should users place in automated platforms?

- Can algorithms truly understand cultural sensitivity?

- And how should technology companies balance speed with responsibility?

As automation continues expanding across digital services, these questions will only become more relevant.

The Bigger Lesson for the Tech Industry

Technology companies have spent years improving artificial intelligence and automation, promising smarter and faster information delivery. But incidents like this remind the industry that technological progress must be matched by ethical safeguards.

Automation can process data at incredible scale, but human judgment still plays a crucial role when language, culture, and social sensitivity are involved.

Experts argue that the future of digital platforms will depend not only on innovation but also on accountability — ensuring technology enhances communication rather than unintentionally causing harm.

Conclusion: A Small Error With a Big Reminder

Google’s apology may close this specific chapter, but the broader conversation is far from over.

A single notification revealed how fragile automated systems can be when context disappears. What began as routine news delivery quickly became a global discussion about responsibility in the age of algorithms.

For users, the incident serves as a reminder that technology — no matter how advanced — is not flawless. And for tech companies, it underscores an increasingly clear lesson: speed and scale must never come at the expense of sensitivity and trust.

As digital platforms continue shaping how the world receives information, ensuring accuracy, context, and respect may prove just as important as delivering news instantly.
